I’ve spent a long time pondering the notion of measurement through the lenses of both art and science.

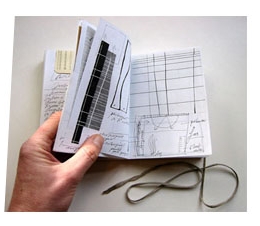

As artist in residence at the Centre for Book and Paper Arts (Columbia College, 2002), I made a small edition of artist’s books that grew out of ideas around possible tools and techniques for measuring various solids (pigment…), ephemera (clouds…) and concepts (growth…). Visual cues were harvested from the astounding behind-the-scenes collections in Chicago’s Field Museum. Deep drawers, once opened, revealed rows of toucan skulls, mats of dried algae and parcelled rodent skins. Forever (and unfortunately) embedded in my memory is the image of a grinning technician waving around a very large penis bone from an aquatic mammal.

Spool ahead a few years, and questions around measuring change form the basis of a questionnaire recently sent out to 540 NZ community groups (see the community questionnaire tab for more details).

In the context of these groups’ environmental restoration projects, measuring success means gauging whether the effort put into works on the ground (e.g. trapping possums, controlling weeds) really has achieved the desired outcomes. There are two different ways of doing this: using formal (science-based) and/or informal (observation-based) methods. Questionnaire data, once analysed, will likely show that smaller, less well-resourced community groups with small-scale projects mostly use informal methods. Building up detailed knowledge of their restoration site over time and witnessing the transition from weeds to natives gives them sufficient evidence that project objectives are being met.

However, when science enters the picture via formal monitoring, it is worth reflecting on the relationship between a group’s objectives and its monitoring activities. Is, for example, monitoring numbers of predators or native birds going to tell the group whether they have achieved their objectives… or not? This question is part of a broader dialogue around ‘strategic’ monitoring.

I keep on coming back to this: “How can groups ensure that their data are needed, relevant, desired, useable, accessible and timely?” (Weiler, 2007). It’s not necessarily worth smaller groups’ time and energy to run formal monitoring programmes. But for groups that do carry out monitoring, who else could be using their hard-won data?

Questionnaire results show that although a number of groups do provide their data to Regional Councils or DOC, only a handful have any idea about how these data are used. I strongly suspect that in many cases the data are not used at all – but that’s to be explored in another post.

Meanwhile, if I am offered anything by a university technician here, it’s not likely to be as memorable as the American experience.